Automating personal email with Claude: what actually works in 2026

Published on: May 5, 2026

Search “automate email with Claude” and you get fifty articles claiming you can hand your inbox to an AI agent and never look at it again. After running this on my own Gmail for three months, here’s what actually works, what wastes time, and the failure modes nobody mentions.

This is written for individuals automating their own personal inbox, not enterprise IT teams rolling out Copilot to 5,000 users. Tools used: Gmail API, Claude (Sonnet 4.6 mostly, Opus 4.7 for hard replies), and a small Python wrapper. No Zapier, no n8n. Just so you know what biases I’m bringing.

What actually saves time

Three things, in order of impact.

1. Newsletter and “FYI” triage

This is the biggest single win and it took twenty minutes to set up. Every morning, Claude reads new mail and sorts it into three buckets: newsletters (auto-archived after a one-line summary goes into a daily digest), pure FYI from people I follow (left in the inbox and flagged as needing no reply), and actually-needs-me. The first two used to consume 40-60% of my reading time; now they reach me as part of a single digest at 8am.

Belkins’ B2B email research suggests roughly 30-50% of an average inbox is non-actionable promotional or update mail. My personal inbox was closer to 70%. Removing it from the read-once-then-forget loop is the highest-leverage change available, and it doesn’t require any AI sophistication beyond “is this a newsletter, yes or no”.
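A minimal sketch of the three-bucket triage, not the wrapper used for this article. The bucket names, the instruction text, and the `ask_model` callable are all assumptions for illustration; `ask_model` stands in for whatever function actually sends text to Claude, and `fake_model` is only there so the example runs offline.

```python
from typing import Callable

# Hypothetical bucket names mirroring the three buckets in the text.
BUCKETS = ("newsletter", "fyi", "needs_me")

TRIAGE_INSTRUCTIONS = (
    "Classify the email into exactly one bucket and answer with the bucket "
    "name only: newsletter, fyi, or needs_me."
)


def parse_bucket(model_reply: str) -> str:
    """Map a model reply onto a known bucket.

    Anything unrecognised falls back to needs_me, so a confused model
    never hides mail from the human.
    """
    answer = model_reply.strip().lower()
    return answer if answer in BUCKETS else "needs_me"


def triage(emails: list[dict], ask_model: Callable[[str, str], str]) -> dict[str, list[dict]]:
    """Group emails by bucket. ask_model(instructions, email_text) returns the reply text."""
    grouped: dict[str, list[dict]] = {bucket: [] for bucket in BUCKETS}
    for email in emails:
        text = f"From: {email['from']}\nSubject: {email['subject']}\n\n{email['body']}"
        bucket = parse_bucket(ask_model(TRIAGE_INSTRUCTIONS, text))
        grouped[bucket].append(email)
    return grouped


def fake_model(instructions: str, email_text: str) -> str:
    """Offline stand-in for the real model call (assumption, for the demo only)."""
    return "Newsletter\n" if "unsubscribe" in email_text.lower() else "maybe?"
```

The design choice worth copying is the fallback: when the reply is not one of the three names, the email lands in the needs-me bucket rather than being archived.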

2. Draft replies for repeating patterns

I get the same five categories of email constantly: scheduling requests, intro asks, “can you take a look at X”, thank-yous, and confirmations. Claude drafts a reply for any of these and saves it as a Gmail draft (not a sent email; see “Auto-sending replies” below). I open the draft, edit lightly, and send.

The data here is consistent with what Harper Reed reported on his blog and what Anthropic’s own engineering team has discussed publicly: Claude is good at matching the tone of your existing replies if you give it 20-30 of your past sent emails as examples. Without that grounding, drafts read like a polite robot. With it, drafts read like a tired version of you, which is fine, because that’s how most replies should read.
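One way the grounding described above could be assembled, as a sketch under stated assumptions: the tag names, the wording of the instructions, and the function name `build_draft_messages` are invented for illustration. The only fixed part is the shape of the result, a list of role/content dictionaries as used by the Anthropic Messages API.

```python
def build_draft_messages(past_replies: list[str], incoming: str, max_examples: int = 30) -> list[dict]:
    """Build a messages list: past replies as style examples, then the new email."""
    examples = past_replies[:max_examples]
    example_block = "\n\n".join(
        f"<example_reply>\n{reply.strip()}\n</example_reply>" for reply in examples
    )
    prompt = (
        "Here are replies I have written in the past. Match their tone and length.\n\n"
        f"{example_block}\n\n"
        "Draft a reply to this email. Return only the reply body.\n\n"
        f"<incoming_email>\n{incoming.strip()}\n</incoming_email>"
    )
    return [{"role": "user", "content": prompt}]
```

The cap of 30 follows the 20-30 range mentioned above; beyond that the prompt mostly gets longer and more expensive.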

3. Surfacing what slipped

The unexpected win. Once a week, Claude scans my Sent folder and flags conversations where someone replied and I never came back to them. That’s it. Just a list. Maybe three emails a week, but those three are usually the ones I actually care about and would have lost otherwise.
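The check itself needs no model at all. A sketch, assuming each thread has already been fetched as a list of dictionaries with `from` and `timestamp` keys; the `auto_reply` flag and the address in `ME` are hypothetical and stand for however you choose to mark auto-responders.

```python
ME = "me@example.com"  # hypothetical address; replace with your own


def needs_follow_up(thread: list[dict], me: str = ME) -> bool:
    """True if I wrote in the thread and the latest message is from someone else."""
    if not thread:
        return False
    ordered = sorted(thread, key=lambda m: m["timestamp"])
    i_participated = any(m["from"] == me for m in ordered)
    last = ordered[-1]
    # Skip auto-responders: a vacation notice is not a reply I owe anything to.
    return i_participated and last["from"] != me and not last.get("auto_reply", False)


def slipped(threads: dict[str, list[dict]], me: str = ME) -> list[str]:
    """Return the ids of threads where someone is still waiting on me."""
    return [thread_id for thread_id, msgs in threads.items() if needs_follow_up(msgs, me)]
```

Claude earns its place in the fuzzier part: deciding, from plain-language rules, which of the flagged threads are worth listing.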

This isn’t novel — Superhuman has a feature like this — but having Claude do it means I can change the rules in plain language (“ignore replies from auto-responders, ignore subscription confirmations”) without paying $30 a month for a different inbox.

What looks impressive but doesn’t pay off

Three failed experiments worth mentioning so you don’t repeat them.

Auto-sending replies

I tried this for a week with a strict whitelist of “low-stakes” senders. It was a mistake. The base error rate of an LLM on email is low but non-zero: call it 1-3% based on my logs. Roughly one in fifty emails went out with a wrong meeting time, a misread name, or a confidently wrong fact. None of those errors were catastrophic, but each one cost more time to recover from than the time saved by auto-sending.
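The trade-off can be written as simple arithmetic. In this sketch only the error rate (one in fifty) comes from the text; the 10 seconds saved per send and the 15 minutes per recovery are illustrative assumptions, so plug in your own numbers.

```python
def net_seconds_saved_per_email(saved_per_send: float, error_rate: float, recovery_cost: float) -> float:
    """Expected time saved per auto-sent email, in seconds (negative means a net loss)."""
    return saved_per_send - error_rate * recovery_cost


def break_even_recovery_cost(saved_per_send: float, error_rate: float) -> float:
    """Recovery time per error, in seconds, above which auto-sending loses time."""
    return saved_per_send / error_rate


# Assumed inputs: 10 s saved per send, 2% error rate, 900 s (15 min) to fix one bad email.
net = net_seconds_saved_per_email(saved_per_send=10, error_rate=0.02, recovery_cost=900)
limit = break_even_recovery_cost(saved_per_send=10, error_rate=0.02)
print(f"net per email: {net:.0f} s, break-even recovery cost: {limit:.0f} s")
```

Under these assumptions, any mistake that takes more than about eight minutes to clean up makes auto-sending a net loss.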

The pattern that works: always draft, never send. The cost of opening Gmail and pressing Send is approximately zero. The cost of the wrong email going out is not zero. Don’t optimise the part that isn’t slow.
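For the draft-only pattern, the Gmail API takes a base64url-encoded RFC 822 message wrapped in a draft resource. A sketch of building that payload with the standard library; the function name and example addresses are assumptions, and the API call itself is shown only as a comment because it needs an authenticated `service` object from google-api-python-client.

```python
import base64
from email.message import EmailMessage
from typing import Optional


def build_draft_body(to: str, subject: str, body: str, thread_id: Optional[str] = None) -> dict:
    """Build the request body for a Gmail draft (nothing is sent)."""
    msg = EmailMessage()
    msg["To"] = to
    msg["Subject"] = subject
    msg.set_content(body)
    # Gmail expects the raw message as URL-safe base64 text.
    raw = base64.urlsafe_b64encode(msg.as_bytes()).decode("ascii")
    message = {"raw": raw}
    if thread_id:
        # Keeps the draft attached to the conversation it answers.
        message["threadId"] = thread_id
    return {"message": message}


draft = build_draft_body("ann@example.com", "Re: lunch", "Tuesday works.", thread_id="t-123")
# With an authenticated client, saving the draft would look like:
#   service.users().drafts().create(userId="me", body=draft).execute()
```

Keeping the code path to `drafts().create` and never writing a call to `messages().send` is the simplest way to make the rule hard to break by accident.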

Cross-inbox unification

I tried having Claude pull from Gmail, Outlook, and Slack into one daily summary. It worked technically but the cognitive cost of trusting one feed for three sources turned out to be higher than the cost of checking three sources. After two weeks I went back to per-source workflows. There’s a research finding from Microsoft (the 2024 “context switching” paper) that suggests aggregating notifications increases anxiety because nothing is “done” — you’re always potentially missing something. Personal experience matched.

Long-context summarisation of threads

Claude can summarise a 40-message thread accurately. The problem is that 40-message threads are usually 40 messages because something is being negotiated, and a summary erases the nuance that made it 40 messages. I now use Claude only for one-shot summaries of threads I’ve already participated in, never for threads where I’m being asked to make a decision.

The failure modes nobody warns you about

Three things to know before you start.

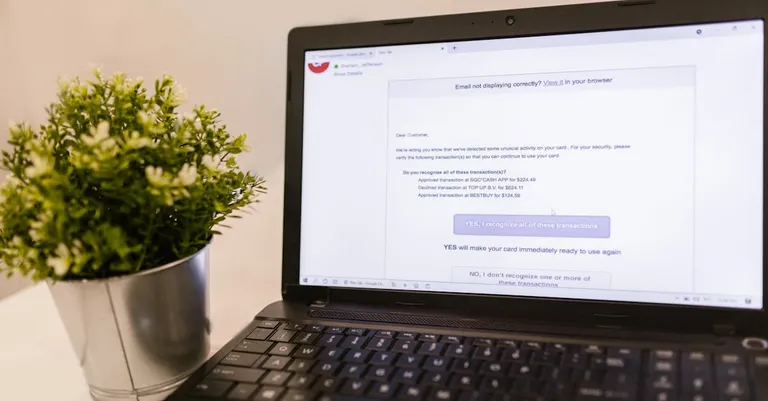

Phishing detection gets weird

Claude is good at noticing phishing — better than me at the obvious cases. But it’s also good at being convinced something is legitimate when it shouldn’t be. I had one case where a well-crafted invoice fraud email got summarised as “looks like an outstanding invoice from your hosting provider” because the email body was internally consistent. Always verify financial or credential-related emails yourself. Don’t let any AI’s summary substitute for looking at the From header, the actual link target, and the sender’s previous emails.
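A small helper can put the facts worth checking next to any summary. This sketch makes no judgement about whether a message is phishing; it only extracts the sender domain and the real link targets so you can compare them yourself. The class and function names are assumptions, and everything used is from the Python standard library.

```python
from email.utils import parseaddr
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collect (visible text, href) pairs for every <a href=...> in an HTML body."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def facts_to_check(from_header: str, html_body: str) -> dict:
    """Return the sender domain and the actual hosts behind every link."""
    _, address = parseaddr(from_header)
    collector = LinkCollector()
    collector.feed(html_body)
    hosts = sorted({urlparse(href).hostname or "" for _, href in collector.links})
    return {
        "sender_domain": address.rpartition("@")[2].lower(),
        "link_hosts": hosts,
        "links": collector.links,
    }
```

A link whose visible text names one domain while its host is another is exactly the kind of detail an internally consistent email body will not reveal.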

Tone drift over time

After two months I noticed all my drafts had developed the same slightly-too-formal cadence. Claude was matching the average tone of the example emails in my prompt, not the tone I currently use. Refresh the example set every few months: pull your last 20 sent emails into the prompt, not your sent folder from a year ago.
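The refresh can be a one-liner run whenever the prompt is rebuilt. A sketch, assuming sent messages are dictionaries with hypothetical `timestamp` and `body` keys:

```python
def latest_examples(sent: list[dict], n: int = 20) -> list[str]:
    """Return the bodies of the n most recently sent emails, newest first."""
    newest_first = sorted(sent, key=lambda m: m["timestamp"], reverse=True)
    return [m["body"] for m in newest_first[:n]]
```

Selecting by recency at prompt-build time, rather than keeping a hand-picked file of examples, means the example set cannot go stale without anyone noticing.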

Quota and API costs creep up

Reading 200 emails a day with Sonnet 4.6 ran me about $4-7 a month, which is fine. But early on I had an experiment where Claude was reading every email twice (once for triage, once for drafting) and that doubled the bill quietly. Cache aggressively. The same email shouldn’t be sent through the model twice unless you genuinely need different outputs.
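The local side of this is a result cache keyed by message and task. A minimal in-memory sketch; the class name and the idea of keying on a (message id, task) pair are assumptions, and a real setup would persist the store to disk between runs.

```python
class ResultCache:
    """Remember model output per (message id, task) so each email is processed once per task."""

    def __init__(self):
        self._store = {}
        self.model_calls = 0  # counts how often the expensive function actually ran

    def get_or_compute(self, message_id: str, task: str, compute):
        """Return the cached result, calling compute() only on a cache miss."""
        key = (message_id, task)
        if key not in self._store:
            self._store[key] = compute()
            self.model_calls += 1
        return self._store[key]


cache = ResultCache()
first = cache.get_or_compute("msg-1", "triage", lambda: "needs_me")
again = cache.get_or_compute("msg-1", "triage", lambda: "this lambda never runs")
draft = cache.get_or_compute("msg-1", "draft", lambda: "Hi, Tuesday works.")
```

Including the task in the key keeps the escape hatch from the text: the same email can still go through the model a second time when a genuinely different output is needed.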

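For reference, Anthropic’s documented prompt caching lets you mark the system prompt and email examples as a cacheable prefix. Later calls that reuse that prefix are billed at a reduced cached-read rate for it, and at the normal rate only for the new email content. The cache is short-lived, so the saving is largest when a morning’s emails are processed back to back. Implementing it properly dropped my costs by roughly 60%.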
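In the Messages API, caching is requested by putting a `cache_control` marker on the last block of the stable prefix. A sketch of the request shape only: the function name and the `max_tokens` value are assumptions, the model id is passed in as a placeholder rather than guessed, and the actual call is left as a comment because it needs the `anthropic` package and an API key.

```python
def build_request(system_prompt: str, examples: str, new_email: str, model: str) -> dict:
    """Build keyword arguments for a Messages API call with a cached prefix."""
    return {
        "model": model,
        "max_tokens": 1024,
        "system": [
            {"type": "text", "text": system_prompt},
            {
                "type": "text",
                "text": examples,
                # Everything up to and including this block is the cacheable prefix.
                "cache_control": {"type": "ephemeral"},
            },
        ],
        # Only this part changes from one email to the next.
        "messages": [{"role": "user", "content": new_email}],
    }


request = build_request("rules go here", "example replies go here", "new mail", model="MODEL_ID_PLACEHOLDER")
# With the Anthropic SDK this would be sent as:
#   anthropic.Anthropic().messages.create(**request)
```

The prefix has to be byte-for-byte identical between calls to hit the cache, and very short prompts may fall under the minimum cacheable length, so check the current documentation before relying on the saving.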

What this looks like in practice

Morning, 8am: a single email lands in my inbox titled “Daily digest — 47 emails”. It contains:

- One-line summary of each newsletter (with link to read)

- Count of FYI mail from people I follow (with names)

- 4-7 emails flagged as actually needing me, each with a draft reply already in Gmail Drafts

- 1-3 “you forgot to reply to” entries from earlier in the week
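Rendering the digest is plain string assembly once the buckets exist. A sketch that follows the four items above; the dictionary keys (`summary`, `link`, `from_name`, `subject`) and the exact wording of each line are assumptions, not the format of the real digest.

```python
def render_digest(newsletters: list[dict], fyi: list[dict], needs_me: list[dict], forgotten: list[str]) -> tuple[str, str]:
    """Return (subject, body) for the morning digest email."""
    total = len(newsletters) + len(fyi) + len(needs_me)
    subject = f"Daily digest - {total} emails"

    lines = ["Newsletters:"]
    lines += [f"- {n['summary']} ({n['link']})" for n in newsletters]

    if fyi:
        names = ", ".join(sorted({m["from_name"] for m in fyi}))
        lines.append(f"FYI: {len(fyi)} emails from {names}")
    else:
        lines.append("FYI: none")

    lines.append("Needs you (drafts are in Gmail Drafts):")
    lines += [f"- {m['subject']}" for m in needs_me]

    lines.append("You forgot to reply to:")
    lines += [f"- {entry}" for entry in forgotten]
    return subject, "\n".join(lines)
```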

I spend 10-15 minutes acting on the digest, mostly opening drafts, lightly editing, sending. The rest of the day I check Gmail directly only for new arrivals, not for triage.

Total weekly time on email went from roughly 7 hours to roughly 3.5. Not the “inbox zero in 5 minutes” promise, but real, sustainable, and based on three months of actual logs rather than a launch-week demo.

Setup without going crazy

The simplest functional setup, in order:

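Step 1: Gmail API access. Use Google Cloud Console, create a project, enable the Gmail API, and generate OAuth credentials with the gmail.readonly and gmail.modify scopes only. Skip gmail.send. Be aware that gmail.modify is a broad scope (Google’s scope documentation lists it among the scopes accepted for sending), so leaving out gmail.send does not by itself make sending impossible; the draft-only rule also has to hold in your own code. This takes 15 minutes if you’ve never done it, 5 minutes if you have.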

Step 2: Claude integration. Two paths. Path A (lower friction): install one of the existing Gmail MCP servers and use Claude Desktop. You can be running by lunch. Path B (more control): write a small Python script using the Anthropic SDK and the Gmail Python library, schedule it with cron. Pick A unless you genuinely want to maintain code.

Step 3: Define rules in plain language. A single Markdown file with rules like “newsletters: auto-archive, summarise in digest”, “from [my partner]: never auto-handle, surface immediately”. Update this file when something annoys you. The rules are where the value lives, not the model choice.

Step 4: Wait two weeks before changing anything. Most setups get tweaked into uselessness in week one. The failure modes only show up in week two.
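For Path B, the rules file from Step 3 can be turned into the system prompt with a few lines. A sketch, assuming the file keeps one rule per Markdown bullet; the file name `rules.md`, the function names, and the prompt wording are all hypothetical.

```python
def parse_rules(markdown_text: str) -> list[str]:
    """Extract one rule per '- ' or '* ' bullet; headings and blank lines are ignored."""
    rules = []
    for line in markdown_text.splitlines():
        stripped = line.strip()
        if stripped.startswith(("- ", "* ")):
            rules.append(stripped[2:].strip())
    return rules


def build_system_prompt(rules: list[str]) -> str:
    """Number the rules so the model, and you, can refer to them individually."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, 1))
    return "You triage my personal inbox. Follow these rules:\n" + numbered


# In a real script the text would come from disk, for example:
#   from pathlib import Path
#   rules = parse_rules(Path("rules.md").read_text(encoding="utf-8"))
```

Because the prompt is rebuilt from the file on every run, editing the Markdown is the whole deployment step when a rule annoys you.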

What I’d skip if I were starting today

- Any “AI inbox” subscription product that doesn’t let you see and edit the prompt. You’re paying for the wrapper and getting locked out of the actual leverage.

- Browser extensions that read your email in real time. The latency is high, the privacy story is complicated, and you don’t need real-time for the use cases that matter.

- Calendar integration in v1. Tempting, but the emails-and-calendar combined surface is where the most expensive errors live. Get email working first.

Sources

- Harper Reed, “Getting Claude Code to do my emails” (2025)

- Belkins B2B email analysis (16.5M emails across 93 domains)

- Anthropic engineering blog posts on prompt caching and Claude tool use

- Gmail API documentation (developers.google.com/gmail/api)

- Microsoft Research, “Context switching and notification overload” (2024)

- Personal logs from three months of running this setup on a 200-emails-per-day inbox